Significance Testing in Dashboard Widgets

About Significance Testing in Dashboard Widgets

Dashboards can help you understand whether the differences you see over time or between groups are statistically significant, and therefore worthy of driving important business decisions. For example, you may have found yourself asking the following:

- Did the NPS really go up this month, or is it a small change that’s just noise in the data?

- Does the Midwest group actually have higher satisfaction scores than the West group?

- Which of my 5 segments had higher or lower than typical scores on this metric?

With significance testing, you can discover which data changes matter most.

Available Widgets and Metrics

Significance testing is currently available in the following widgets, with the following parameters. We’ll go over these in more detail in the following sections.

Widgets

Qtip: Significance testing is also compatible with any type of dashboard that supports the widgets listed above, including CX, EX, BX, and Results Dashboards.

Metrics

- Average

- NPS

- Top / bottom boxes

- Subset Ratio

Qtip: In order to conduct the significance test, the numerator must be a subset of the values selected for the denominator. Additionally, the ratio must be less than one.

- Custom metrics
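The two subset ratio requirements noted in the list above (the numerator values are a subset of the denominator values, and the ratio is less than one) can be illustrated with a small check. This is only a sketch: the function name `subset_ratio` and the sample responses are hypothetical and are not part of the dashboard product.

```python
from collections import Counter


def subset_ratio(responses, numerator_values, denominator_values):
    """Compute a subset ratio and enforce the two requirements for testing."""
    num_set, den_set = set(numerator_values), set(denominator_values)
    # Requirement 1: every numerator value must also be a denominator value.
    if not num_set <= den_set:
        raise ValueError("numerator values must be a subset of denominator values")
    counts = Counter(responses)
    numerator = sum(counts[v] for v in num_set)
    denominator = sum(counts[v] for v in den_set)
    if denominator == 0:
        raise ValueError("no responses match the denominator values")
    ratio = numerator / denominator
    # Requirement 2: the ratio must be strictly less than one.
    if ratio >= 1:
        raise ValueError("the ratio must be less than one")
    return ratio


# Hypothetical 1-5 satisfaction responses; the ratio is the share of 4s and 5s.
sample = [1, 2, 3, 4, 5, 5, 4, 3]
print(subset_ratio(sample, [4, 5], [1, 2, 3, 4, 5]))  # 0.5
```

A ratio such as "4s and 5s out of all 1 to 5 responses" passes both checks, while "4s and 5s out of 1s and 2s" fails the subset check.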

Qtip: When testing significance across time periods, the x-axis/y-axis dimension should be a date field. When testing significance across values, the x-axis/y-axis dimension should be a non-date field.

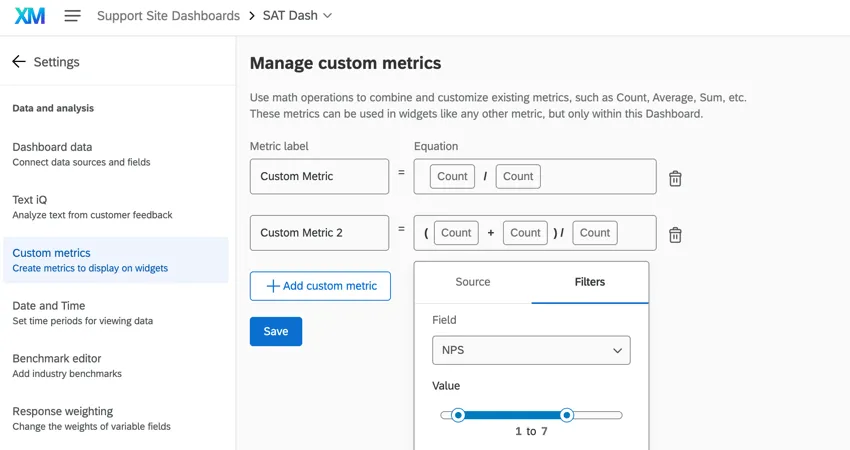

Qtip: In some legacy widgets, one type of custom metric can be used with significance testing: proportional custom metrics with a single field as the divisor. Proportional custom metrics follow the general format of (A + B) / C where A, B, and C are different data fields. A / B also works, since there is just a single field as the divisor. When creating custom metrics for this purpose, you can only use counts, as shown below. There cannot be a static number in the equation; e.g., (A + 5) / B will not work.

However, custom metrics, including proportional custom metrics, are unsupported in the majority of newly created widgets.

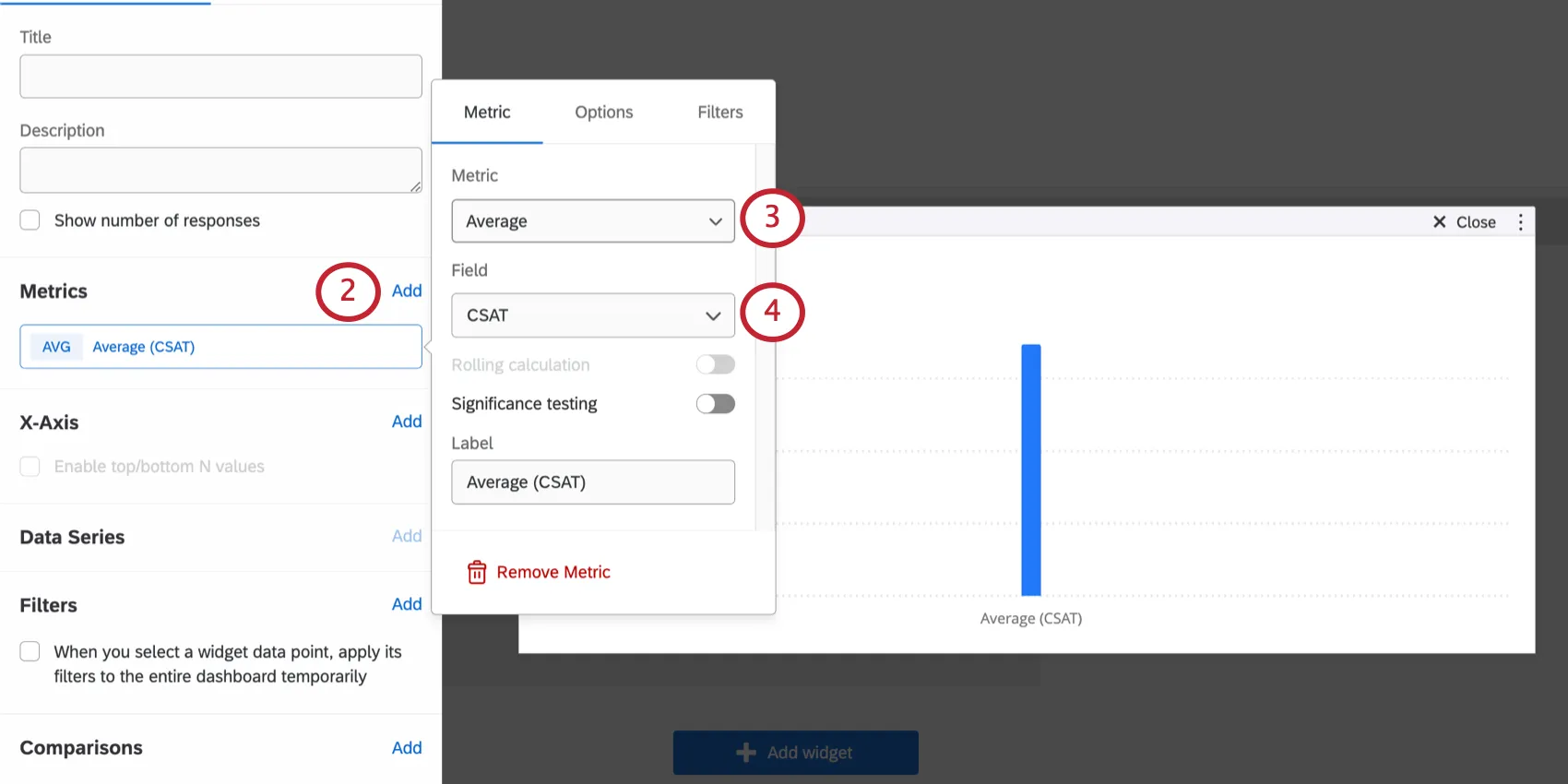

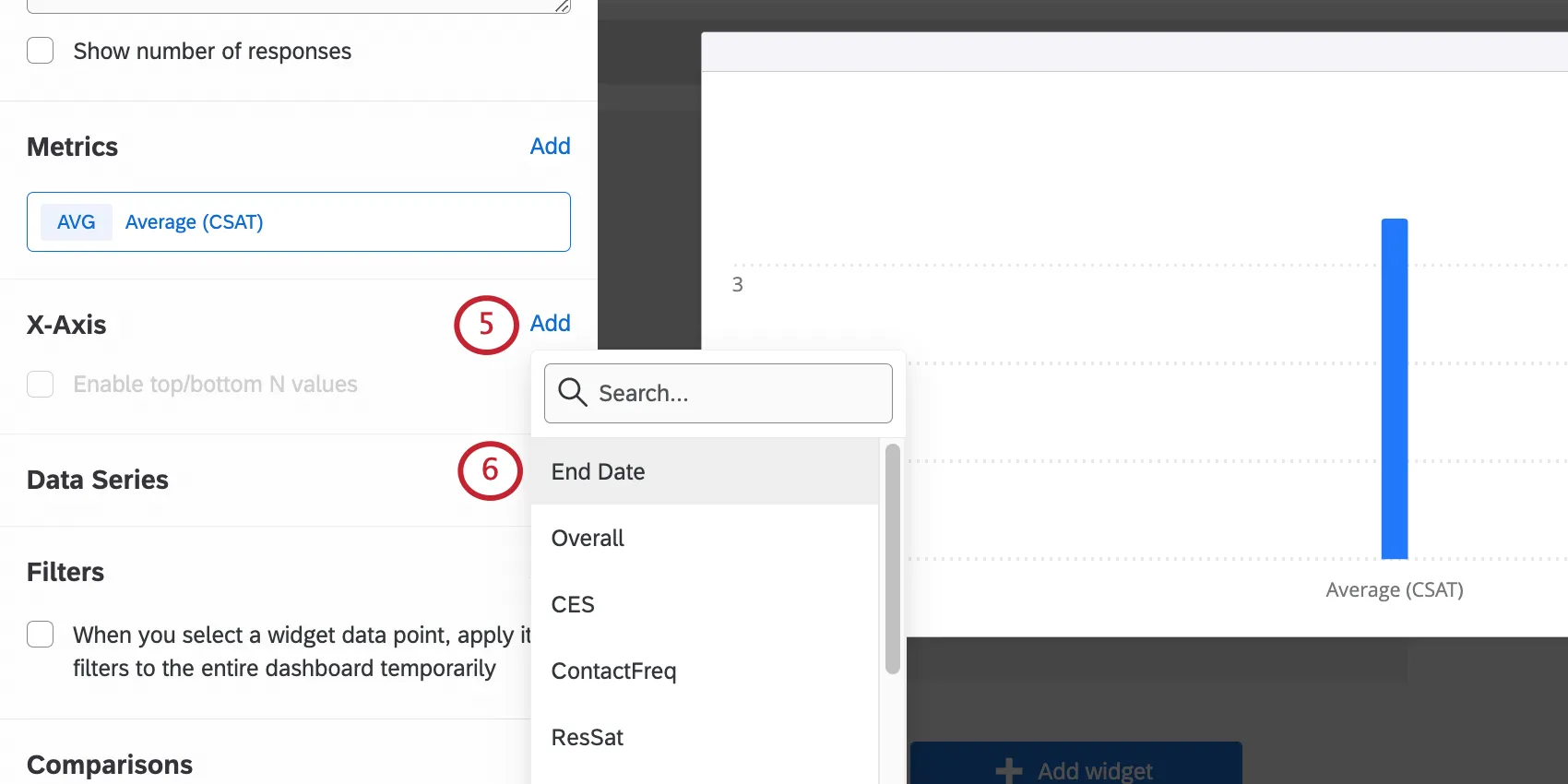

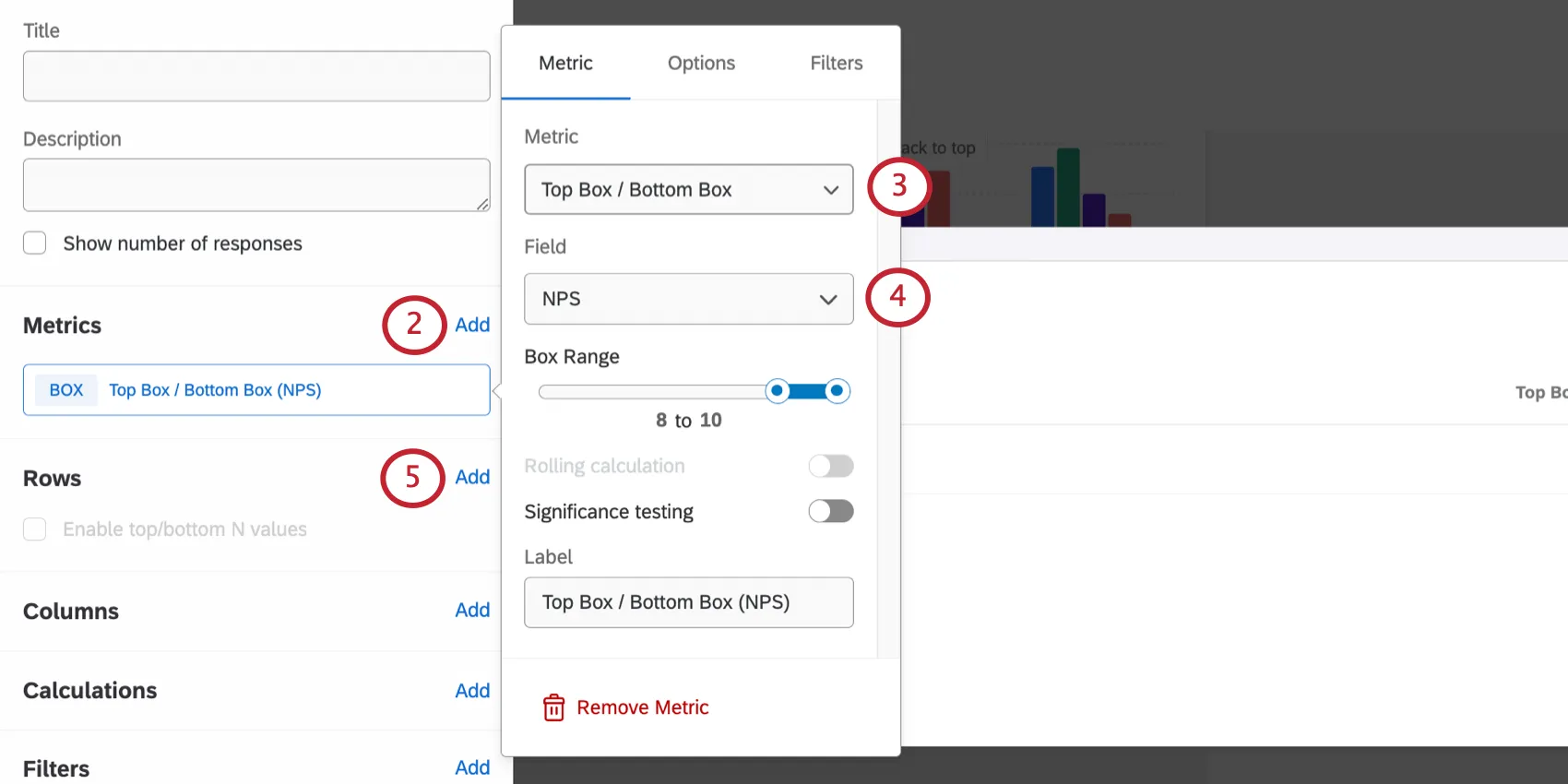
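As a rough illustration of the proportional format (A + B) / C described in this section, the sketch below computes such a metric from counts only. The function name and the sample counts are hypothetical; in the dashboard the terms are data fields chosen in the metric editor, not Python values.

```python
def proportional_metric(numerator_counts, divisor_count):
    """Return (A + B + ...) / C, where every term is a response count."""
    counts = list(numerator_counts)
    # Every term must be a count, so only non-negative integers are accepted.
    if any(not isinstance(c, int) or c < 0 for c in counts + [divisor_count]):
        raise ValueError("every term must be a non-negative count")
    if divisor_count == 0:
        raise ValueError("the divisor count must be greater than zero")
    return sum(counts) / divisor_count


# Hypothetical counts: A = 30, B = 20, C = 100.
print(proportional_metric([30, 20], 100))  # 0.5
```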

Setting Up Line and Bar Charts

For details on each of these options, see Configuring Significance Testing.

Setting Up Tables

For details on each of these options, see Configuring Significance Testing.

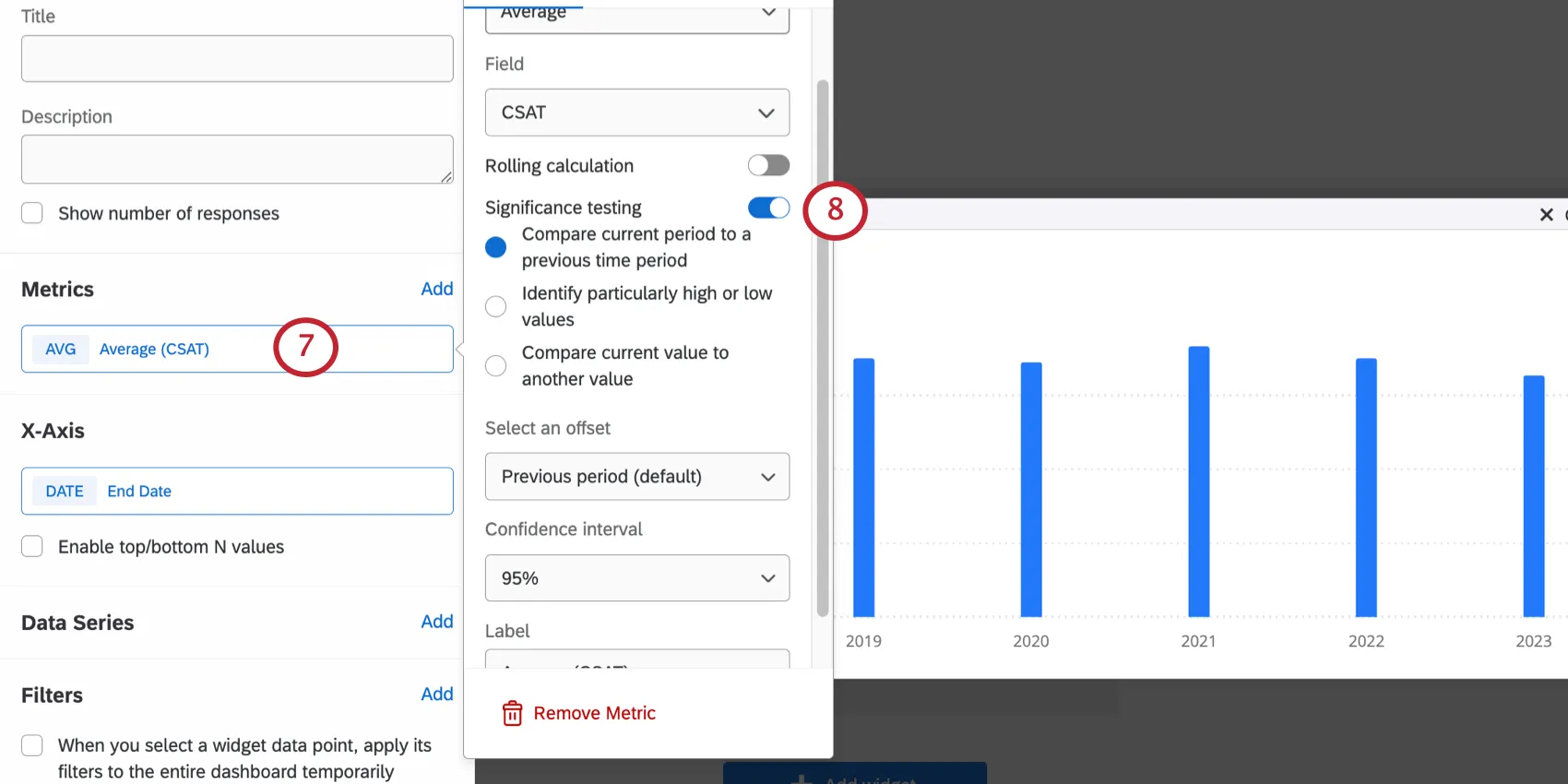

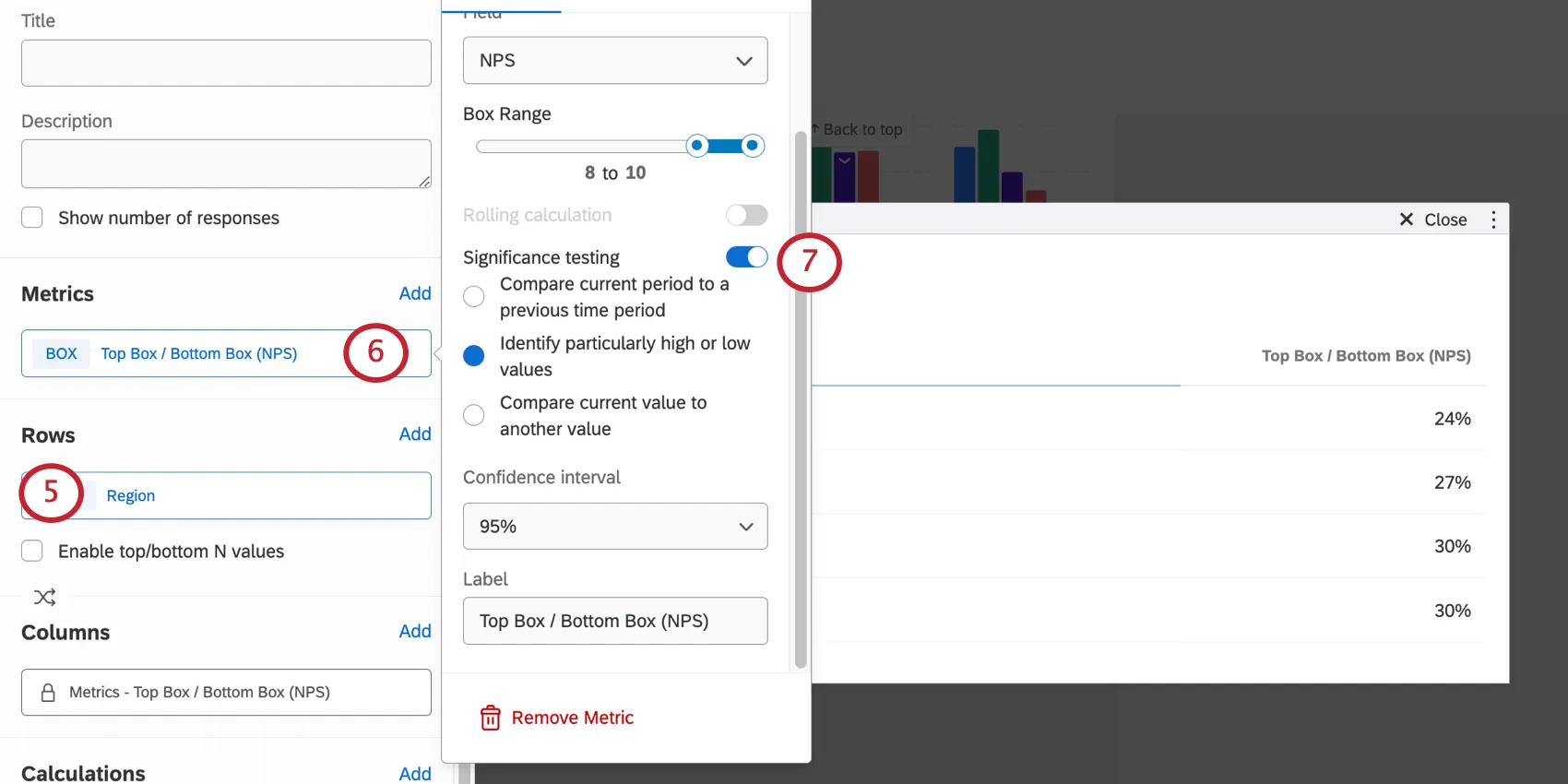

Configuring Significance Testing

Once you’ve set up your line chart, bar chart, or table and turned on Significance testing, you’ll have a few options to choose from.

Comparing Significance Across Multiple Dimensions

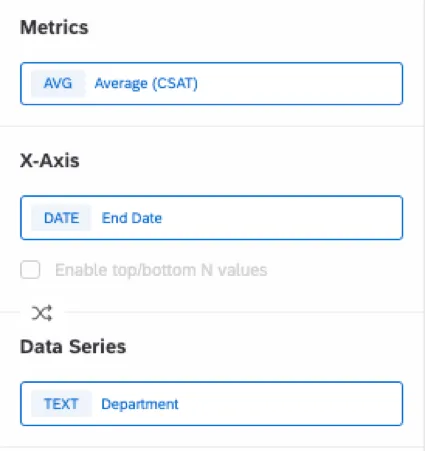

You can compare significance across multiple dimensions by adding a metric, x-axis, and data series. In order for this to work, you need to make sure that:

- The x-axis field is a date field.

- The data series field is a non-date field.

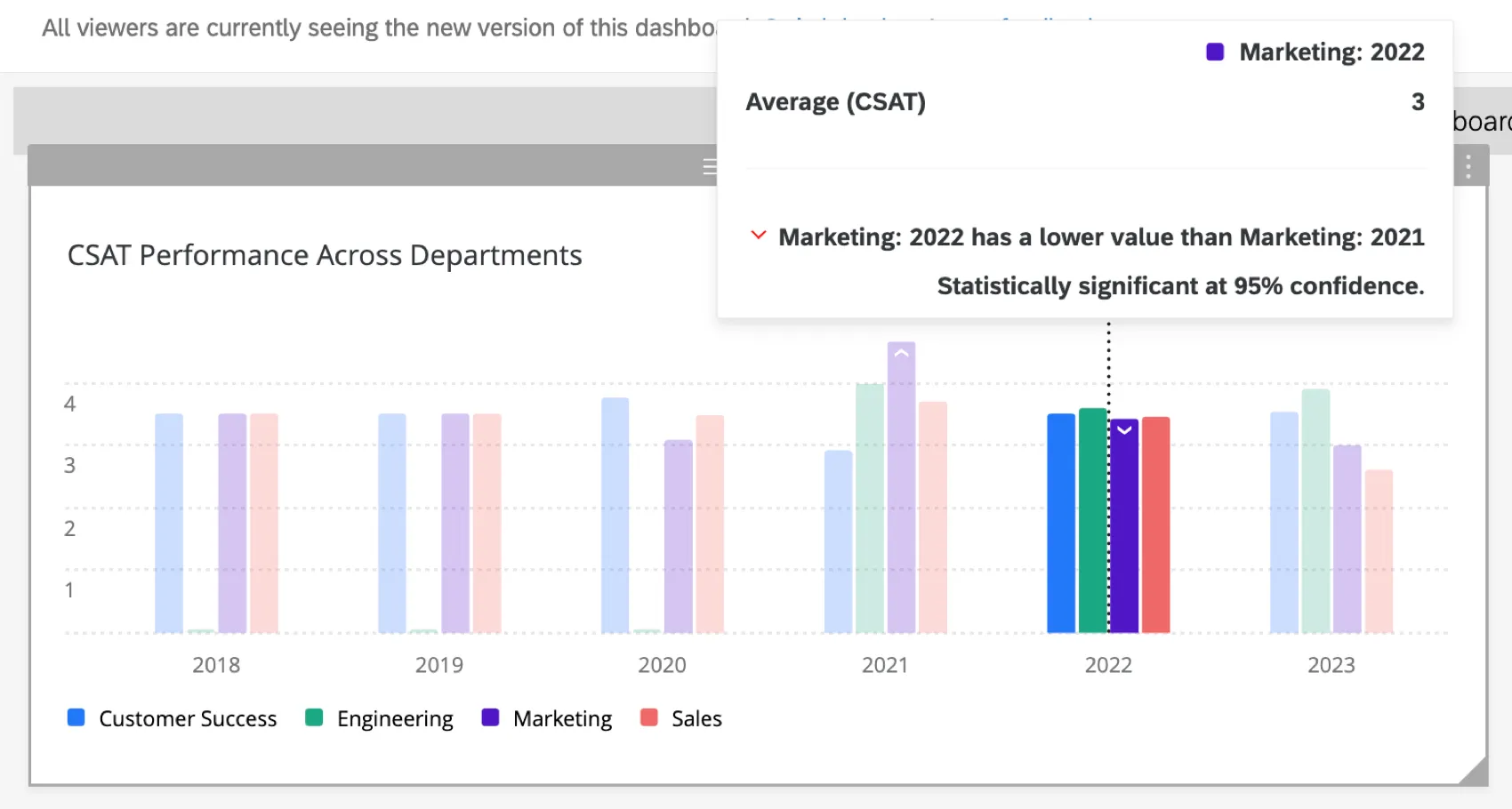

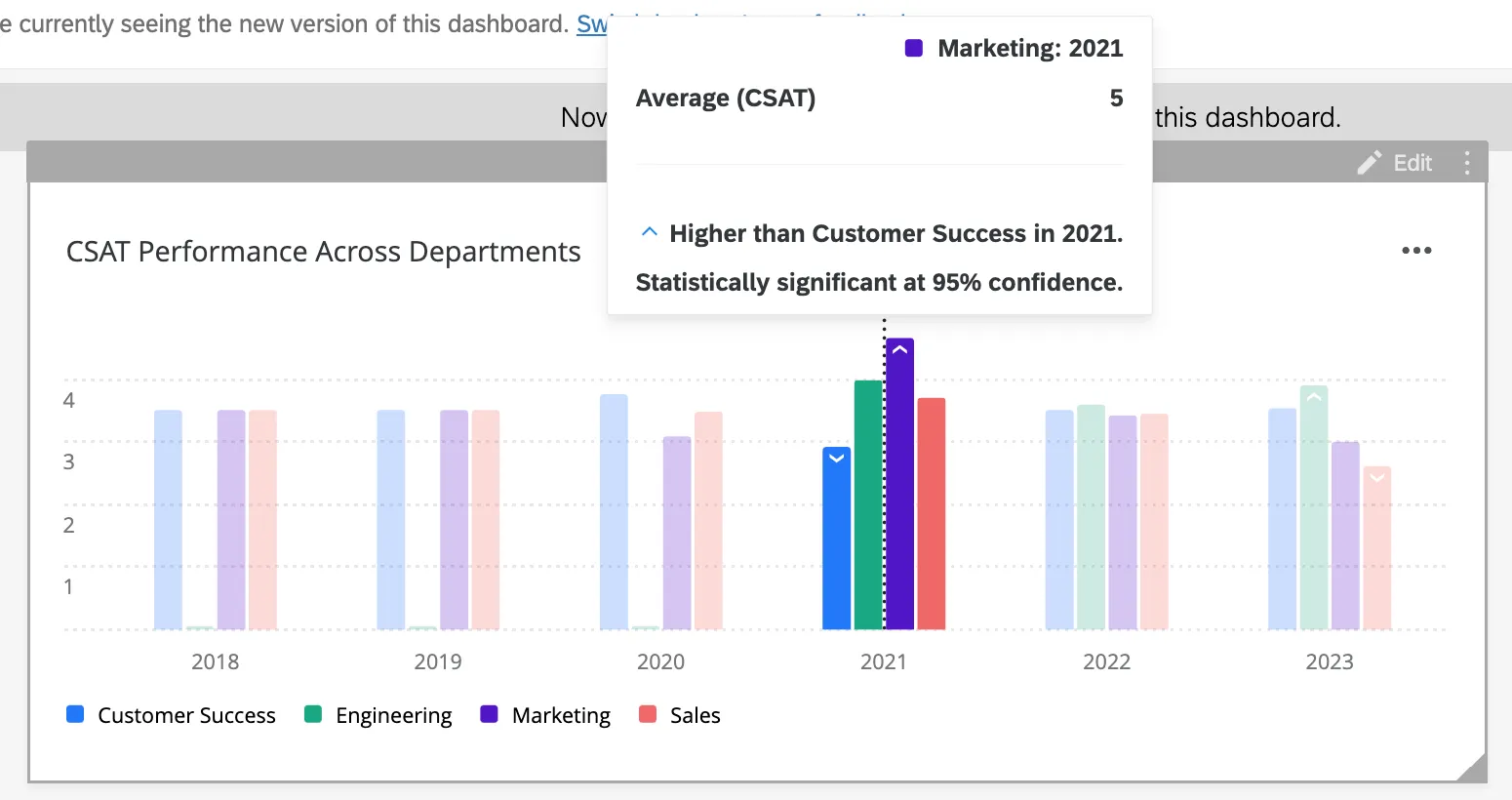

Example: This widget displays the average CSAT for different departments each year. The metric is Average CSAT, the x-axis is the date, and the data series is the department.

Selecting Compare current period to a previous time period compares significance across time periods for each distinct department.

Compare current value to another value can also be selected to compare significance across departments within each distinct time period.
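The two comparison directions in this example can be pictured as two ways of grouping the same data. The sketch below uses made-up averages and hypothetical helper names, and it assumes that "a previous time period" means the immediately preceding one; the widget's actual pairing logic is not documented on this page.

```python
from collections import defaultdict

# Hypothetical data: (year, department) -> average CSAT.
averages = {
    (2019, "Sales"): 4.1,
    (2019, "Support"): 3.8,
    (2020, "Sales"): 4.3,
    (2020, "Support"): 3.9,
}


def across_time(data):
    """For each department, pair every period with the one before it."""
    periods_by_dept = defaultdict(list)
    for period, dept in data:
        periods_by_dept[dept].append(period)
    pairs = []
    for dept, periods in periods_by_dept.items():
        periods.sort()
        for previous, current in zip(periods, periods[1:]):
            pairs.append((dept, previous, current))
    return pairs


def across_values(data):
    """For each period, list the departments compared within that period."""
    depts_by_period = defaultdict(list)
    for period, dept in data:
        depts_by_period[period].append(dept)
    return {period: sorted(depts) for period, depts in depts_by_period.items()}


print(across_time(averages))    # one (department, previous, current) tuple per pair
print(across_values(averages))  # departments grouped under each year
```

In the first grouping, each department is only ever compared with itself across years. In the second, each year is treated separately and the departments are compared with one another.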

Understanding Significance Testing

The Confidence Interval indicates how confident you would like to be that the results generated through the analysis match the general population. Higher confidence levels raise the threshold for a difference to be considered statistically significant, meaning only the clearest differences will be marked as such.
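To see why a higher confidence level marks fewer differences as significant, consider the critical value of a two-sided z-test. This is a generic statistical sketch that assumes a normal approximation; this page does not state which test the widgets run, and `critical_z` is a hypothetical helper name.

```python
from statistics import NormalDist


def critical_z(confidence):
    """Two-sided critical z-value for a confidence level such as 0.95."""
    alpha = 1.0 - confidence
    return NormalDist().inv_cdf(1.0 - alpha / 2.0)


# A test statistic must exceed this magnitude to count as significant.
for level in (0.80, 0.90, 0.95):
    print(f"{level:.0%} confidence: |z| must exceed {critical_z(level):.2f}")
```

Under these assumptions, the threshold grows from about 1.28 at 80% to about 1.96 at 95%, so a difference that is flagged at 80% may no longer be flagged at 95%.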

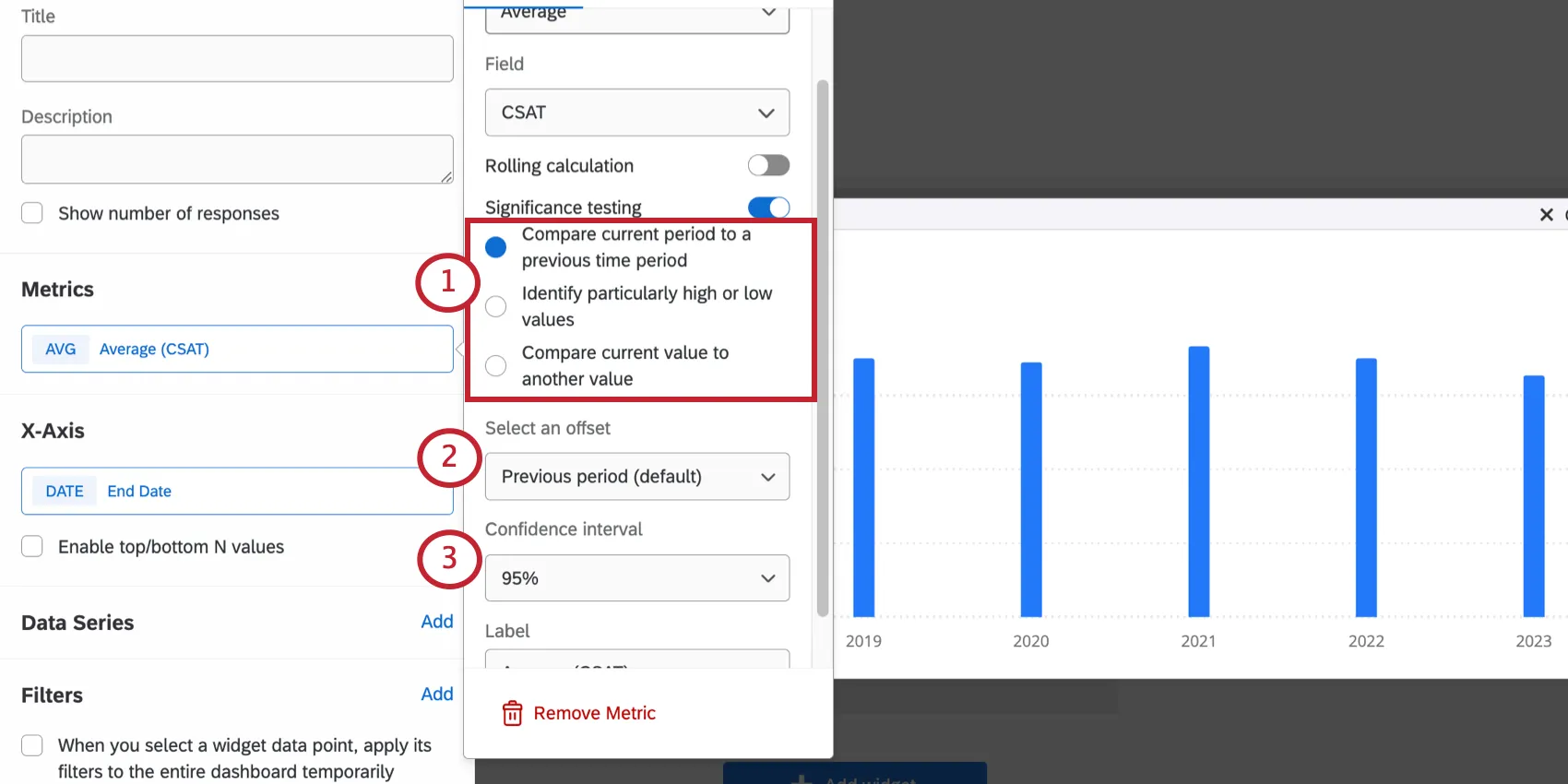

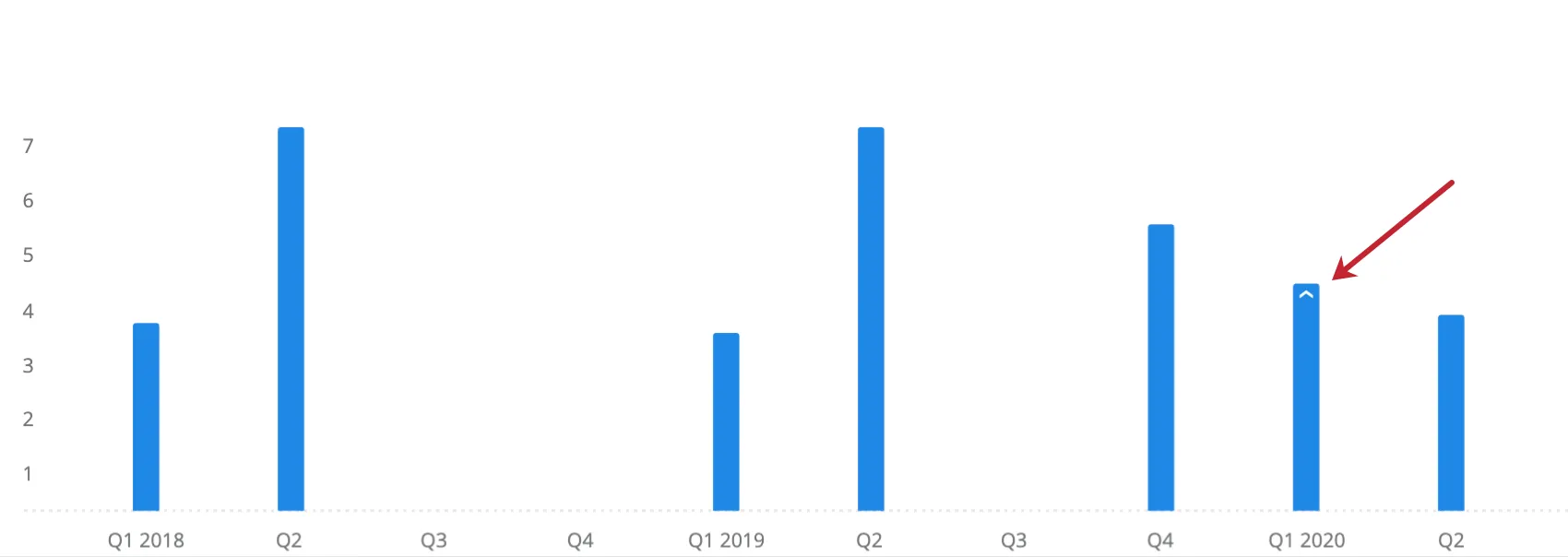

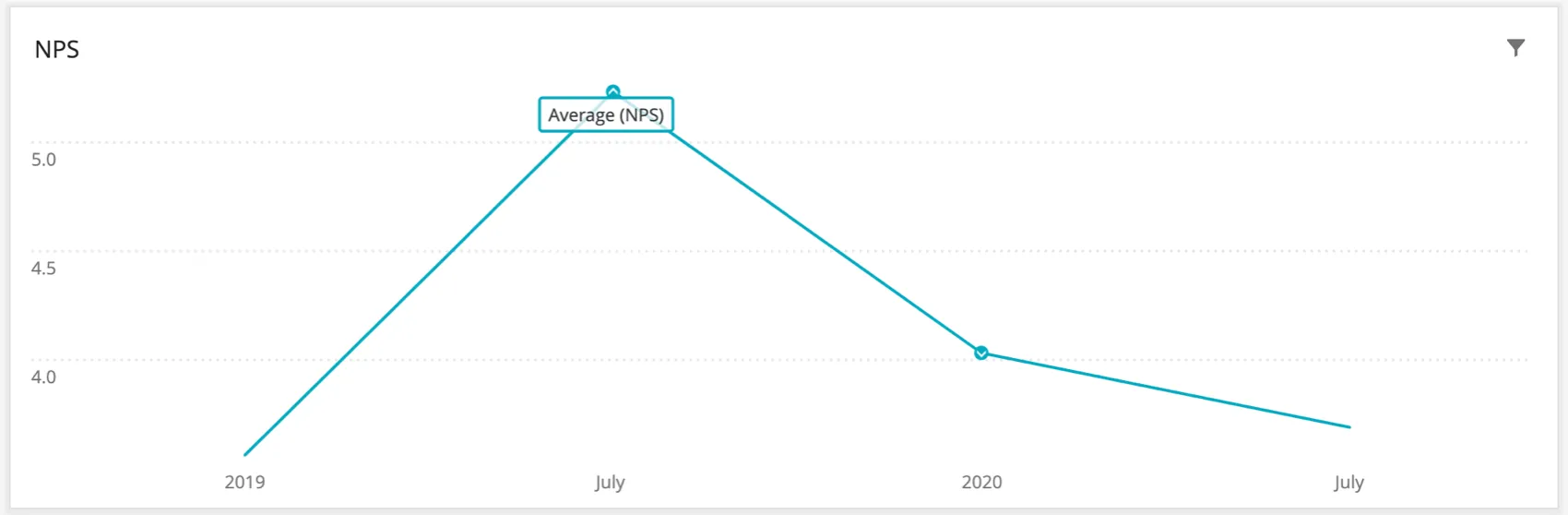

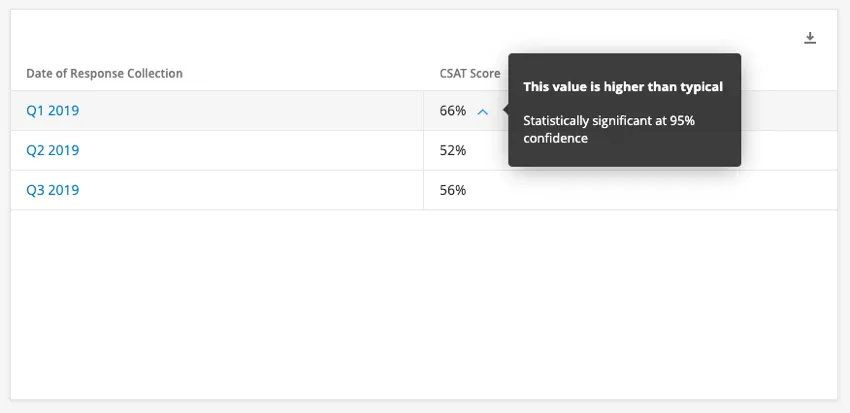

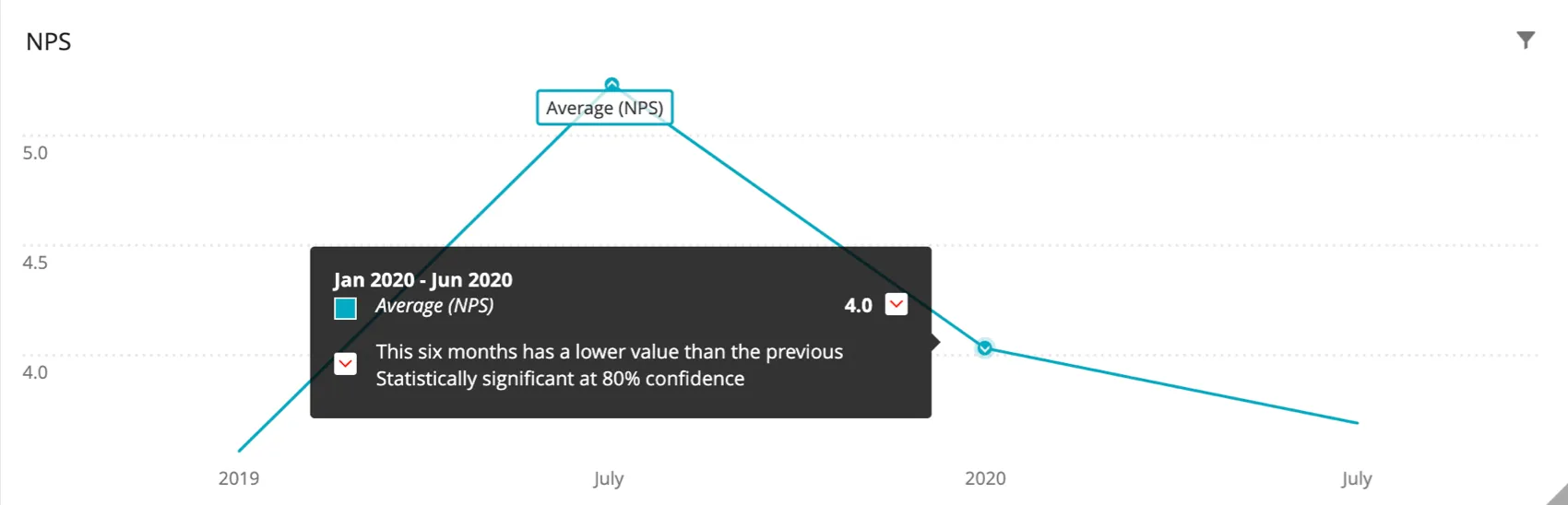

Once you have enabled significance testing, you might notice upwards and downwards arrows in your widget. These arrows indicate statistically significant values.

You can hover over an arrow to determine why the value is considered significant, and what the confidence interval of that test was.

Example: Here we hover over the blue arrow next to Q1 2019’s CSAT score. The tooltip tells us this value is higher than typical, and the confidence interval for this is 95%.

Example: Here we hover over the arrow above the January 2020 – June 2020 NPS score. The tooltip tells us the value for this six-month period is lower than that of the previous six-month period (July 2019 – December 2019), and the confidence interval for this is 80%.

Technical Notes on Significance Testing

When comparing one NPS score to another, regardless of chart type or type of comparison (e.g., over time), the following process is used:

When comparing one top box score to another, regardless of chart type or type of comparison (e.g., over time), the following process is used:

FAQs

Why are my metrics adding up to 99 or 101 instead of 100?

33.60 + 33.60 + 32.80 = 100

Whereas if you choose to display no decimals with the same dataset:

34 + 34 + 33 = 101

Widgets can’t show infinite decimals, which means that regardless of decimal settings, some data will eventually have to be rounded. Small deviations, like totals of 99 or 101 instead of 100, are therefore expected and do not indicate a problem with your data.
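The arithmetic above can be reproduced with a short script. This sketch assumes half-up rounding, which may not match the rounding mode the widgets use; `displayed` is a hypothetical helper name.

```python
from decimal import Decimal, ROUND_HALF_UP

# The three percentages from the example above.
shares = [Decimal("33.60"), Decimal("33.60"), Decimal("32.80")]


def displayed(values, places):
    """Round each value to the given number of decimal places."""
    quantum = Decimal(10) ** -places  # places=2 gives 0.01, places=0 gives 1
    return [v.quantize(quantum, rounding=ROUND_HALF_UP) for v in values]


print(sum(displayed(shares, 2)))  # 100.00
print(sum(displayed(shares, 0)))  # 101
```

Each value is rounded on its own before it is displayed, so the rounded values are not guaranteed to add up to the rounded total.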
